The last CLN release was terrible for really large nodes: the bookkeeper migrations were exponentially slow on Postgres, then the first call to bookkeeper would grind the node and even OOM it.

Some quick fixes went in, and there will probably be another point release with those. Thanks to those who donated their giant accounting dbs!

But the exciting thing is all the optimization and cleanup work that came from me profiling how we handle this large data. Not just reducing the time (those singly linked lists being abused!), which I've spent the last few weeks on, but last night I started measuring *latency* while we're under stress.

1. Anyone can spray us with requests: the initial problem was caused by bookkeeper querying a few hundred thousand invoices.

2. A plugin can spew almost infinite logs.

3. xpay intercepts every command in case xpay-handle-pay is set, so it can intercept "pay" commands.

Fixes:

1. Yield after 100 JSON commands in a row: we had logic for autoclean, but that was 250ms, not count based. We now do both.

2. Fix our I/O loop to rotate through fds and deferred tasks, so yield is fair.

3. Yielding on plugin reply RPC too, which definitely helps.

4. Hooks can now specify an array of filter strings: for the command hook, these are the commands you're interested in.
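
Fixes 1 and 2 can be illustrated with a minimal Python sketch. This is not lightningd's actual C code: `drain_with_budget`, `run_round_robin`, and the dictionary of sources are hypothetical names invented for illustration. Only the two budgets (100 commands in a row, 250ms) come from the note. The sketch assumes each source gets one budgeted turn before the loop moves on to the next one.

```python
import time
from collections import deque

MAX_BATCH = 100           # commands handled in a row before yielding (from the note)
MAX_SLICE_SECONDS = 0.25  # the older 250ms time budget (from the note)


def drain_with_budget(queue, handle, clock=time.monotonic,
                      max_batch=MAX_BATCH, max_slice=MAX_SLICE_SECONDS):
    """Handle queued commands until the queue is empty or a budget runs out.

    Both budgets apply: a count limit and a wall-clock limit.
    Returns the number of commands handled in this turn.
    """
    start = clock()
    handled = 0
    while queue and handled < max_batch and clock() - start < max_slice:
        handle(queue.popleft())
        handled += 1
    return handled


def run_round_robin(sources, handle):
    """Rotate through sources (name -> deque of commands).

    A source that still has work after its turn goes to the back of the
    ring, so a busy source cannot starve a quiet one.
    Returns the list of (source name, commands handled) turns, in order.
    """
    ring = deque(sources)
    turns = []
    while ring:
        name = ring.popleft()
        handled = drain_with_budget(sources[name], handle)
        if handled:
            turns.append((name, handled))
        if sources[name]:
            ring.append(name)  # unfinished: wait for the next rotation
    return turns


if __name__ == "__main__":
    seen = []
    sources = {"spammer": deque(range(250)), "cli": deque(["help"])}
    print(run_round_robin(sources, seen.append))
```

With a source that queues 250 commands next to one that queues a single `help`, the single command is served after the first 100 rather than after all 250, which is the fairness property the fixes are after.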

As a result, my hand-written sleep1/lightning-cli help test spikes at 44ms, not 110 seconds!
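
The test itself isn't shown in this note, so the following is only a guess at its general shape: time a cheap probe repeatedly while the node is busy and keep the worst case. `worst_latency` and its parameters are hypothetical names, and the probe is passed in as a callable so the sketch does not depend on a running node.

```python
import time


def worst_latency(probe, rounds=10, clock=time.perf_counter):
    """Call probe() `rounds` times and return the slowest call, in seconds."""
    worst = 0.0
    for _ in range(rounds):
        start = clock()
        probe()
        worst = max(worst, clock() - start)
    return worst


if __name__ == "__main__":
    # Stand-in probe; against a real node it could instead shell out to
    # `lightning-cli help` via subprocess.run (an assumption, not the
    # author's actual test harness).
    spike = worst_latency(lambda: time.sleep(0.01), rounds=5)
    print(f"worst latency: {spike * 1000:.1f} ms")
```

Tracking the maximum rather than the mean matters here: a single 110-second stall would be nearly invisible in an average over many fast calls.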

My next steps are to use synthetic data (say, 10M records to allow for future growth) and formalize latency measurements into our CI.

Next release looking amazing!

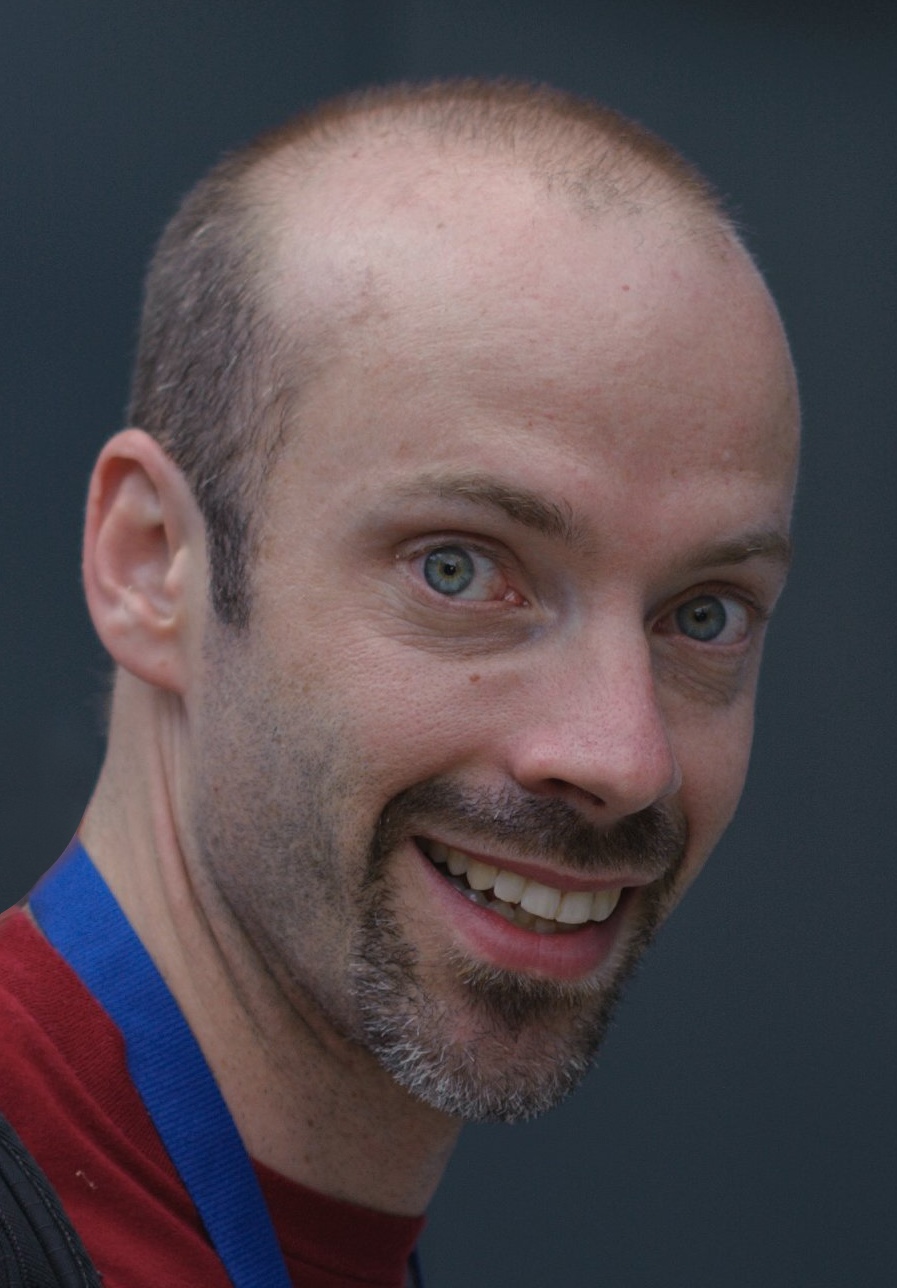

Rusty Russell

npub179…elz4s

2025-10-29 22:21:54 CET

Author Public Key

npub179e9tp4yqtqx4myp35283fz64gxuzmr6n3yxnktux5pnd5t03eps0elz4s

Seen on

wss://sgl.rustcorp.com.au

Published at

2025-10-29 22:21:54 CET